For quite a while now, cloud-native has been one of the hottest topics in software development. Some developers just call it hype that will lose traction and disappear after some time. For others, it’s the future of software development.

Whatever tomorrow brings, cloud-native is currently one of the biggest trends in the software industry. Additionally, it has already changed the way we think about developing, deploying and operating software products.

But what exactly is cloud native?

Cloud native is a lot more than just signing up with a cloud provider and using it to run your existing applications. Cloud native affects the design, implementation, deployment and operation of your application.

Pivotal, the software company that offers the popular Spring framework and a cloud platform, describes cloud native as:

“Cloud native is an approach to building and running applications that fully exploit the advantages of the cloud computing model.”

The Cloud Native Computing Foundation, an organization that aims to create and drive the adoption of the cloud-native programming paradigm, defines cloud native as:

“Cloud native computing uses an open source software stack to be:

– Containerized. Each part (applications, processes, etc.) is packaged in its own container. This facilitates reproducibility, transparency, and resource isolation.

– Dynamically orchestrated. Containers are actively scheduled and managed to optimize resource utilization.

– Microservices-oriented. Applications are segmented into microservices. This significantly increases the overall agility and maintainability of applications.”

The two definitions are similar, but they look at the topic from slightly different perspectives. You could summarize them as:

“An approach that builds software applications as microservices and runs them on a containerized and dynamically orchestrated platform to utilize the advantages of the cloud computing model.”

Let’s be honest: “Utilize the advantages of the cloud computing model” sounds great. But, if you’re new to cloud-native computing, you’re probably still wondering what this is all about. You may also wonder how it affects the way you implement your software. At least that’s how I felt when I read about cloud-native computing for the first time.

Let’s take a look at the different parts.

The basic idea of containers is to package your software together with everything it needs to run, e.g., a Java VM, an application server, and the application itself, into one executable package. You then run this container in a virtualized environment, which isolates the contained application from its surroundings.

The main benefit of this approach is that the application becomes independent of the environment. An added benefit is that the container is highly portable. You can easily run the same container on your development, test or production system. And if your application design supports horizontal scaling, you can start or stop multiple instances of a container to add or remove instances of your application based on the current user demand.

The Docker project is currently the most popular container implementation. It’s so popular that people use the terms Docker and container interchangeably. Keep in mind that the Docker project is just one implementation of the container concept and could be replaced in the future.

If you want to give Docker a try, you should start with the free community edition. You can install Docker on your local desktop. This allows you to start building your own container definitions and deploy your first application into a container. And when you’re done, you can hand over the container to a coworker who does quality assurance and deploys it to production afterward.

You no longer need to worry about whether your application will work in the test or production environment, or whether you need to update some dependencies. The container contains everything your application needs, and you just need to start it.

The official documentation shows you how to run Docker on your system and provides a good guide for getting started.

Deploying your application with all its dependencies into a container is just the first step. It solves the deployment problems you had previously, but if you want to benefit fully from a cloud platform, you’ll face new challenges.

Starting additional nodes or shutting down running application nodes based on the current load of your system won’t be easy. You’ll need to track the current load and start or stop containers in response, among other tasks.

Doing all of that manually requires a lot of effort. Additionally, it is too slow to react to unexpected changes in system load. You need to have the right tools in place that automatically do all of this for you. This is what orchestration solutions are built for. Popular options include Docker Swarm, Kubernetes, Apache Mesos and Amazon’s ECS.
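To make the scaling decision more concrete, here is a minimal sketch of the kind of rule an orchestrator evaluates for you. It uses the proportional rule that the Kubernetes documentation describes for its Horizontal Pod Autoscaler (desired = ceil(current × currentMetric / targetMetric)). The class name `ReplicaCalculator`, the min/max bounds and the sample numbers are assumptions made for illustration and are not part of any orchestrator’s API.

```java
// Hypothetical helper: computes how many instances should run for a given load.
final class ReplicaCalculator {
    private final int minReplicas;
    private final int maxReplicas;

    ReplicaCalculator(int minReplicas, int maxReplicas) {
        if (minReplicas < 1 || maxReplicas < minReplicas) {
            throw new IllegalArgumentException("require 1 <= minReplicas <= maxReplicas");
        }
        this.minReplicas = minReplicas;
        this.maxReplicas = maxReplicas;
    }

    // currentMetric and targetMetric use the same unit, e.g. average CPU utilization in percent.
    int desiredReplicas(int currentReplicas, double currentMetric, double targetMetric) {
        if (currentReplicas < 1 || currentMetric < 0 || targetMetric <= 0) {
            throw new IllegalArgumentException("invalid replica count or metric");
        }
        // Proportional rule: scale the instance count by the ratio of observed to target load.
        int desired = (int) Math.ceil(currentReplicas * currentMetric / targetMetric);
        // Never scale below the minimum or above the maximum.
        return Math.max(minReplicas, Math.min(maxReplicas, desired));
    }
}
```

With a target of 60% average CPU utilization and bounds of 1 to 10 instances, four instances running at 90% would be scaled up to six, and four instances running at 15% would be scaled down to one. A real orchestrator adds more on top of this, such as tolerances and cool-down periods, to avoid reacting to every short spike.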

Now that we have all the infrastructure and management in place, it’s time to talk about the changes that cloud native introduces to the architecture of your system. Cloud-native applications are built as a system of microservices. I’m sure you’ve heard about that architectural approach already, and I’ve also written a series of posts about it here on this blog.

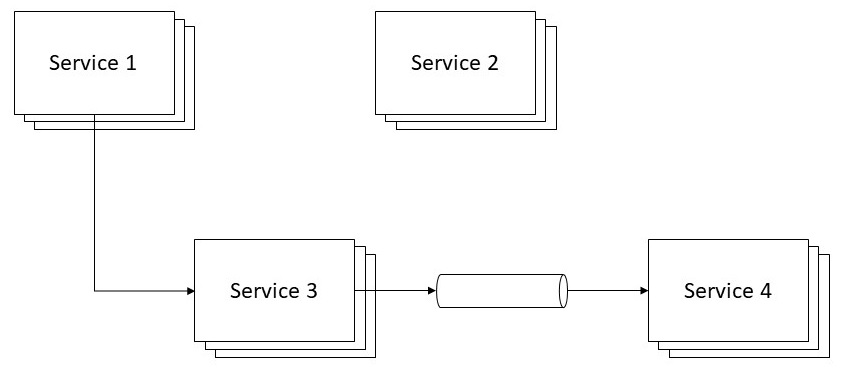

The general idea of this architectural style is to implement a system of multiple, relatively small applications. These are called microservices and work together to provide the overall functionality of your system. Each microservice implements exactly one piece of functionality, has a well-defined boundary and API, and is developed and operated by a relatively small team.

This approach provides several benefits.

First of all, it’s a lot easier to implement and understand a smaller application that provides one functionality, instead of building a large application that does everything. The approach speeds up development and makes it a lot easier to adapt the service to changed or new requirements. You needn’t worry too much about unexpected side effects of a seemingly small change. Additionally, it allows you to focus on the development task at hand.

Microservices also allow you to scale more efficiently. When I talked about containers, I said that you could simply start another container to handle an increase in user requests. We call this horizontal scaling. You can basically do the same with every stateless application, independent of its size. As long as the application doesn’t keep any state, you can send the next request of a user to any available application instance.
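The following sketch illustrates why statelessness matters here: because no instance remembers anything about a user, a dispatcher can hand each request to whichever instance is next in line. The class name `RoundRobinBalancer` and the instance names are hypothetical, and the example assumes Java 10 or later. Real load balancers also take health checks and instance load into account.

```java
import java.util.List;
import java.util.concurrent.atomic.AtomicInteger;

// Hypothetical dispatcher that spreads requests evenly over stateless instances.
final class RoundRobinBalancer {
    private final List<String> instances;
    private final AtomicInteger counter = new AtomicInteger();

    RoundRobinBalancer(List<String> instances) {
        if (instances.isEmpty()) {
            throw new IllegalArgumentException("at least one instance is required");
        }
        this.instances = List.copyOf(instances); // defensive, immutable copy
    }

    // Returns the instance that should handle the next request.
    String nextInstance() {
        // floorMod keeps the index non-negative even after the counter overflows.
        int index = Math.floorMod(counter.getAndIncrement(), instances.size());
        return instances.get(index);
    }
}
```

Adding capacity then only means creating the balancer with a longer list of instances. If the application kept per-user state in memory, this would break, because a user’s next request could land on an instance that has never seen that user.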

Even though you can scale similarly with a monolithic application or a system of microservices, it’s often a lot cheaper to scale a system of microservices. You just need to scale the microservice that gets a lot of load. As long as the rest of the system can handle the current load, you don’t need to add any additional instances of the other services.

Scaling is entirely different with a monolithic application. If you need to increase the capacity of one feature, you need to start a new instance of the complete monolith. That might not seem like a big deal, but in a cloud environment, you pay for the usage of hardware resources. Even if you only use a small part of the monolith, you still need to acquire additional resources for the other, unused parts.

Microservices, as you can see, let you use cloud resources more efficiently and reduce your monthly cloud bill.
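A quick back-of-the-envelope calculation shows the difference. The sketch below compares the additional memory you would need to provision in both cases. The class name `ScalingCost` and all memory figures are made-up assumptions for illustration, and the model deliberately ignores the per-service overhead (runtime, container) that a real system of microservices adds.

```java
// Hypothetical cost model: memory is given in MiB per component or service.
final class ScalingCost {

    // Scaling a monolith replicates every component, whether it is under load or not.
    static int monolithExtraMemory(int[] componentMemory, int extraInstances) {
        int total = 0;
        for (int memory : componentMemory) {
            total += memory;
        }
        return total * extraInstances;
    }

    // Scaling microservices replicates only the service that is under load.
    static int microserviceExtraMemory(int[] componentMemory, int hotIndex, int extraInstances) {
        return componentMemory[hotIndex] * extraInstances;
    }
}
```

Assume four components that need 512, 256, 1024 and 256 MiB, and that the second one needs three additional instances. Calling `monolithExtraMemory(new int[] {512, 256, 1024, 256}, 3)` yields 6144 MiB, while `microserviceExtraMemory(new int[] {512, 256, 1024, 256}, 1, 3)` yields 768 MiB.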

As always, you don’t get the benefits of an architectural style for free. Microservices remove some complexity from the services themselves and provide better scalability, but you’re now building a distributed system. That adds a lot more complexity on the system level.

To minimize this additional complexity, try to avoid any dependencies between your microservices. If that’s impossible, you need to make sure that dependent services find each other and implement their communication efficiently. You also need to handle slow or unavailable services so that they don’t affect the complete system. I go into more detail about the communication between microservices in Communication Between Microservices: How to Avoid Common Problems.
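One common way to keep a slow or unavailable service from dragging down its callers is to combine a timeout with a fallback value. The sketch below shows the idea with the JDK’s `CompletableFuture` (Java 9 or later). The class name `ResilientCall`, the method name and the choice of a cached fallback are assumptions for illustration. Libraries built for this purpose offer more complete solutions, such as circuit breakers.

```java
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.TimeUnit;
import java.util.function.Supplier;

// Hypothetical helper: never lets a remote call block or fail the caller.
final class ResilientCall {

    static <T> T callWithFallback(Supplier<T> remoteCall, long timeoutMillis, T fallback) {
        return CompletableFuture.supplyAsync(remoteCall)
                // If the call is too slow, complete with the fallback instead of waiting.
                .completeOnTimeout(fallback, timeoutMillis, TimeUnit.MILLISECONDS)
                // If the call fails, also answer with the fallback.
                .exceptionally(error -> fallback)
                .join();
    }
}
```

A caller could use it as `ResilientCall.callWithFallback(() -> priceService.currentPrice(id), 200, cachedPrice)`, where `priceService` and `cachedPrice` are placeholders for your own code. Note that the timed-out call is not cancelled and keeps running in the background, so this protects the caller but does not reduce load on the slow service.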

The distributed nature of your system also makes it a lot harder to monitor and manage your system in production. Instead of a few monoliths, you now need to monitor a system of microservices, and for each service, there might be several instances that run in parallel. When you need to monitor additional application instances, use a tool like Retrace to collect the information from all systems.

You don’t need to use a specific framework or technology stack to build a microservice, but doing so makes it a lot easier. Such frameworks and technology stacks provide lots of ready-to-use features that are well tested and can be used in production environments.

In the Java world, there are lots of different options available, including the popular Spring Boot and Eclipse MicroProfile.

As you might guess from its name, Spring Boot integrates the well-known Spring framework with several other frameworks and libraries to handle the additional challenges of the microservice architecture.

Eclipse MicroProfile follows the same idea, but uses Java EE. Multiple Java EE application server vendors work together to provide a set of specifications and multiple, interchangeable implementations.

The ideas and concepts of cloud-native computing introduced a new way to implement complex, scalable systems. Even if you’re not hosting your application on a cloud platform, these new ideas will influence how you develop applications in the future.

Containers make it a lot easier to distribute an application. Use containers during your development process to share applications between team members or to run applications in different environments. After all tests are executed, you can easily deploy the same container to production.

Microservices provide a new way to structure your system, yet introduce new challenges and shift your attention to the design of each component. Microservices improve encapsulation and allow you to implement maintainable components that you can quickly adapt to new requirements.

If you decide to use containers to run a system of microservices in production, you’ll need an orchestration solution that helps you to manage the system.

Whether a fad or not, cloud native offers flexible solutions for software products depending on the project requirements. In fact, microservices are used by many companies to implement their software products.

When you employ cloud services, having a tool like Retrace will help you monitor performance. Retrace is a code-level APM tool capable of managing and monitoring your app’s performance throughout the software development lifecycle and offers other valuable features, such as log management, error tracking and application performance metrics. Get your FREE 14-DAY TRIAL today.