Docker has changed the way we build, package, and deploy applications. But this concept of packaging apps in containers isn’t new—it was in existence long before Docker.

Docker just made container technology easy for people to use. This is why Docker is a must-have in most development workflows today. Most likely, your dream company is using Docker right now.

Docker’s official documentation has a lot of moving parts. Honestly, it can be overwhelming at first. You could find yourself needing to glean information here and there to build that Docker image you’ve always wanted to build.

Maybe building Docker images has been a daunting task for you, but it won’t be after you read this post. Here, you’ll learn how to build—and how not to build—Docker images. You’ll be able to write a Dockerfile and publish Docker images like a pro.

First, you’ll need to install Docker. Docker runs natively on Linux. That doesn’t mean you can’t use Docker on Mac or Windows. In fact, there’s Docker for Mac and Docker for Windows. I won’t go into details on how to install Docker on your machine in this post. If you’re on a Linux machine, this guide will help you get Docker up and running.

Now that you have Docker set up on your machine, you’re one step closer to building images with Docker. Most likely, you’ll come across two terms, “containers” and “images,” that can be confusing.

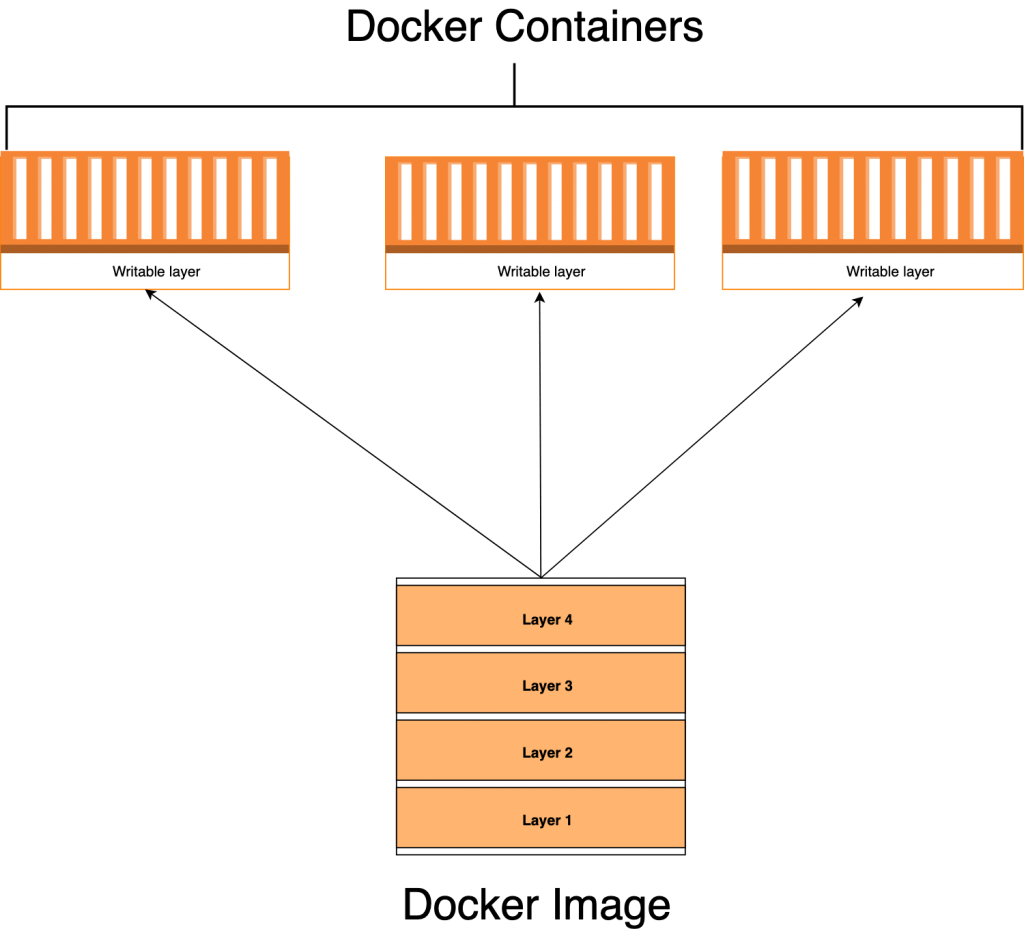

Docker containers are runtime instances of Docker images, whether running or stopped. In fact, one of the major differences between Docker containers and images is that containers have a writable layer and it’s the container that runs your software. You can think of a Docker image as the blueprint of a Docker container.

When you create a Docker container, you’re adding a writable layer on top of the Docker image. You can run many Docker containers from the same Docker image, and each one gets its own writable layer.

It’s time to get our hands dirty and see how Docker build works in a real-life app. We’ll generate a simple Node.js app with an Express app generator. Express generator is a CLI tool used for scaffolding Express applications. After that, we’ll go through the process of using Docker build to create a Docker image from the source code.

We start by installing the express generator as follows:

$ npm install express-generator -g

Next, we scaffold our application using the following command:

$ express docker-app

Now we move into the generated project directory and install package dependencies:

$ cd docker-app && npm install

Start the application with the command below:

$ npm start

If you point your browser to http://localhost:3000, you should see the application default page, with the text “Welcome to Express.”

Mind you, the application is still running on your machine, and you don’t have a Docker image yet. Of course, there are no magic wands you can wave at your app and turn it into a Docker container all of a sudden. You’ve got to write a Dockerfile and build an image out of it.

Docker’s official docs define Dockerfile as “a text document that contains all the commands a user could call on the command line to assemble an image.” Now that you know what a Dockerfile is, it’s time to write one.

Docker builds images by reading the instructions in a Dockerfile. A Dockerfile instruction has two components: the instruction and its argument.

A Dockerfile instruction can be written as:

RUN npm install

Here, “RUN” is the instruction and “npm install” is the argument. There are many Dockerfile instructions; the table below explains the ones you’ll come across most often. We’ll use some of them in this post.

| Dockerfile Instruction | Explanation |
| --- | --- |
| FROM | Specifies the base image we want to start from. |
| RUN | Runs commands during the image build process. |
| ENV | Sets environment variables within the image, making them accessible both during the build process and while the container is running. If you only need to define build-time variables, use the ARG instruction instead. |
| COPY | Copies a file or folder from the host system into the Docker image. |
| EXPOSE | Documents the port the container listens on at runtime. It does not publish the port by itself. |
| ADD | An advanced form of the COPY instruction. You can copy files from the host system into the Docker image. You can also use it to copy files from a URL into a destination in the Docker image, or to copy a tarball from the host system and have it extracted automatically into a destination in the Docker image. |
| WORKDIR | Sets the working directory for the instructions that follow it. |
| VOLUME | Creates a mount point for a volume in the Docker container. |
| USER | Sets the user name or UID used for the instructions that follow it and when running the container. You can use this instruction to run the container as a non-root user. |
| LABEL | Adds metadata to the Docker image. |
| ARG | Defines build-time variables using key-value pairs. These ARG variables will not be accessible when the container is running. To keep a variable available within a running container, use the ENV instruction instead. |
| CMD | Sets the default command to run when a container starts. Only one CMD instruction is allowed, and if multiple are present, only the last one takes effect. |
| ENTRYPOINT | Specifies the command that will execute when the Docker container starts. If you don’t specify an ENTRYPOINT, a CMD written in shell form runs through “/bin/sh -c”. |
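The difference between ARG and ENV in the table is easier to see in a file. The snippet below is a minimal sketch, not part of the app we build in this post: it writes a throwaway Dockerfile to a temporary directory, and the variable name `APP_VERSION` is a hypothetical example.

```shell
# Write a small demo Dockerfile into a temporary directory.
workdir="$(mktemp -d)"

cat > "${workdir}/Dockerfile" <<'EOF'
FROM node:18-alpine
# ARG exists only while the image is being built.
ARG APP_VERSION=0.0.0
# ENV is stored in the image and visible in running containers.
ENV NODE_ENV=production
# Copy the build-time value into an ENV if the running app needs it.
ENV APP_VERSION=${APP_VERSION}
# The shell inside the container expands these variables at startup.
CMD ["sh", "-c", "echo version=$APP_VERSION env=$NODE_ENV"]
EOF

cat "${workdir}/Dockerfile"
```

If Docker is available, building this with `docker build --build-arg APP_VERSION=1.2.3 -t arg-demo "$workdir"` and then running `docker run --rm arg-demo` should print the version that was passed at build time. The tag `arg-demo` is just a placeholder.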

Enough of all the talk. It’s time to create docker instructions we need for this project. At the root directory of your application, create a file with the name “Dockerfile.”

$ touch Dockerfile

There’s an important concept you need to internalize—always keep your Docker image as lean as possible. This means packaging only what your applications need to run. Please don’t do otherwise.

In reality, source code usually contains other files and directories like .git, .idea, .vscode, or ci.yml. Those are essential for our development workflow, but our app doesn’t need them to run. It’s a best practice to keep them out of your image, and that’s what .dockerignore is for. We use it to prevent such files and directories from making their way into our build.

Create a file with the name .dockerignore at the root folder with this content:

    .git
    .gitignore
    node_modules
    npm-debug.log
    Dockerfile*
    docker-compose*
    README.md
    LICENSE
    .vscode

A Dockerfile usually starts from a base image. As defined in the [Docker documentation](https://docs.docker.com/engine/reference/builder/), a base image or parent image is the image that your image is based on. It’s your starting point. It could be an Ubuntu OS, Red Hat, MySQL, Redis, etc.

Base images don’t just fall from the sky. They’re created—and you too can create one from scratch. There are also many base images out there that you can use, so you don’t need to create one in most cases.

We add the base image to Dockerfile using the FROM command, followed by the base image name:

    # Filename: Dockerfile
    FROM node:18-alpine

Let’s instruct Docker to copy our source during Docker build:

    # Filename: Dockerfile
    FROM node:18-alpine
    WORKDIR /usr/src/app
    COPY package*.json ./
    RUN npm install
    COPY . .

First, we set the working directory using WORKDIR. We then copy files using the COPY command. The first argument is the source path, and the second is the destination path on the image file system. We copy package.json and install our project dependencies using npm install. This will create the node_modules directory that we once ignored in .dockerignore.

You might be wondering why we copied package.json before the source code. Docker images are made up of layers, and each layer is created from the output of one instruction. Since package.json does not change as often as our source code, we don’t want to keep rebuilding node_modules each time we run Docker build.

Copying the files that define our app dependencies and installing them right away lets us take advantage of the Docker cache: as long as those files are unchanged, Docker reuses the cached layers instead of running npm install again. The main benefit here is quicker build time. There’s a really nice blog post that explains this concept in detail.
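To make the caching idea concrete, here is a rough analogy in plain shell. It is not how Docker is implemented internally; it only shows that editing source code leaves the checksum of the dependency manifest untouched, which is why the dependency layers can be reused. The file contents are hypothetical stand-ins.

```shell
# Work in a throwaway directory so nothing real is touched.
demo_dir="$(mktemp -d)"
cd "$demo_dir"

# Hypothetical stand-ins for the dependency manifest and the app source.
printf '{"name":"docker-app","version":"0.0.0"}\n' > package.json
printf 'console.log("v1");\n' > app.js

# Checksum of the files that "COPY package*.json ./" would copy.
before="$(cksum package*.json)"

# Change only the source code, as you would during normal development.
printf 'console.log("v2");\n' > app.js

# Checksum the manifest again after the source change.
after="$(cksum package*.json)"

if [ "$before" = "$after" ]; then
  echo "manifest unchanged: dependency layers can be reused"
else
  echo "manifest changed: npm install would run again"
fi
```

Only the final source copy step sees a different input here, so in a real build that is the point from which Docker would start rebuilding.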

Exposing port 3000 tells Docker which port the container listens on at runtime. EXPOSE on its own doesn’t publish the port to the host; that happens later, when we run the container. Let’s modify the Dockerfile and expose port 3000.

    # Filename: Dockerfile
    FROM node:18-alpine
    WORKDIR /usr/src/app
    COPY package*.json ./
    RUN npm install
    COPY . .
    EXPOSE 3000

The CMD command tells Docker how to run the application we packaged in the image. The CMD follows the format CMD ["command", "argument1", "argument2"].

    # Filename: Dockerfile
    FROM node:18-alpine
    WORKDIR /usr/src/app
    COPY package*.json ./
    RUN npm install
    COPY . .
    EXPOSE 3000
    CMD ["npm", "start"]

With Dockerfile written, you can build the image using the following command:

$ docker build .

We can see the image we just built using the command docker images.

$ docker images

If you run the command above, you will see something similar to the output below.

    REPOSITORY   TAG       IMAGE ID       CREATED         SIZE
    <none>       <none>    7b341adb0bf1   2 minutes ago   83.2MB

When you have many images, it becomes difficult to know which image is what. Docker provides a way to tag your images with friendly names of your choosing. This is known as tagging. Let’s proceed to tag the Docker image we just built. Run the command below:

$ docker build . -t yourusername/example-node-app

If you run the command above, you should have your image tagged already. Running docker images again will show your image with the name you’ve chosen.

$ docker images

The output of the above command should be similar to this:

    REPOSITORY                      TAG       IMAGE ID       CREATED         SIZE
    yourusername/example-node-app   latest    be083a8e3159   7 minutes ago   83.2MB

You run a Docker image by using the docker run command:

$ docker run -p 80:3000 yourusername/example-node-app

The command is pretty simple. The -p flag maps a port on the host machine to the port the app listens on inside the container, in the form host:container. Here, port 80 on the host forwards to port 3000 in the container, so you can now access your app from your browser at http://localhost.
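The value passed to -p reads as host port first, container port second. As a small sketch, the snippet below splits a mapping string the same way using plain shell parameter expansion. The variable names are illustrative and not part of Docker, and the sketch covers only the simple two-part form.

```shell
# Hypothetical mapping string, in the same form that `docker run -p` accepts.
mapping="80:3000"

# Everything before the colon is the port opened on the host machine.
host_port="${mapping%%:*}"

# Everything after the colon is the port the app listens on in the container.
container_port="${mapping##*:}"

echo "host port ${host_port} -> container port ${container_port}"
```

If port 80 is already in use on your machine, a mapping such as 8080:3000 would make the app available at http://localhost:8080 instead.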

To run the container in a detached mode, you can supply argument -d:

$ docker run -d -p 80:3000 yourusername/example-node-app

A big congrats to you! You just packaged an application that can run anywhere Docker is installed.

The Docker image you built still resides on your local machine. This means you can’t run it on any other machine outside your own—not even in production! To make the Docker image available for use elsewhere, you need to push it to a Docker registry.

A Docker registry is where Docker images live. One of the popular Docker registries is Docker Hub. You’ll need an account to push Docker images to Docker Hub, and you can create one [here.](https://hub.docker.com/)

With your [Docker Hub](https://hub.docker.com/) credentials ready, you need only to log in with your username and password.

$ docker login

When prompted, enter your Docker Hub username and your password or access token to authenticate.

Retag the image with a version number:

$ docker tag yourusername/example-node-app yourusername/example-node-app:v1

Then push with the following:

$ docker push yourusername/example-node-app:v1
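The repository name has to be identical in the tag and push commands, and it has to start with your own Docker Hub username. One way to avoid a mismatch is to build the image reference once and reuse it. The sketch below only prints the commands as a dry run; the variable names and values are placeholders.

```shell
# Placeholder values: replace them with your own Docker Hub details.
DOCKER_USER="yourusername"
IMAGE_NAME="example-node-app"
VERSION="v1"

# Full image reference in the form username/repository:tag.
IMAGE_REF="${DOCKER_USER}/${IMAGE_NAME}:${VERSION}"

# Print the commands instead of running them, so this is safe to try anywhere.
echo "docker tag ${DOCKER_USER}/${IMAGE_NAME} ${IMAGE_REF}"
echo "docker push ${IMAGE_REF}"
```

Once the printed commands look right, you can run them directly or remove the echo wrappers.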

If you’re as excited as I am, you’ll probably want to poke your nose into what’s happening in this container, and even do cool stuff with the Docker CLI.

You can list Docker containers:

$ docker ps

And you can inspect a container, using a container ID or name from the docker ps output:

$ docker inspect <container-id>

You can view the logs of a Docker container:

$ docker logs <container-id>

And you can stop a running container:

$ docker stop <container-id>
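These commands are often chained: look up the container, check on it, then stop it. The following is a minimal sketch that assumes the container was started from the yourusername/example-node-app image used in this post. It does nothing if Docker isn’t available or no matching container is running, and the `status` variable is only there to report what happened.

```shell
# Image name used earlier in this post; adjust it to match your own tag.
IMAGE="yourusername/example-node-app"
status="skipped"

# Only proceed when the docker CLI exists and the daemon is reachable.
if command -v docker >/dev/null 2>&1 && docker info >/dev/null 2>&1; then
  # Find the first running container created from the image.
  container_id="$(docker ps -q --filter "ancestor=${IMAGE}" | head -n 1)"

  if [ -n "$container_id" ]; then
    # Show the container state, its most recent log lines, then stop it.
    docker inspect --format '{{.State.Status}}' "$container_id"
    docker logs --tail 20 "$container_id"
    docker stop "$container_id"
    status="stopped"
  else
    status="no-container"
  fi
fi

echo "result: ${status}"
```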

Logging and monitoring are as important as the app itself. You shouldn’t put an app in production without proper logging and monitoring in place, no matter what the reason. Retrace provides first-class support for Docker containers. This guide can help you set up a Retrace agent.

The whole concept of containerization is about taking away the pain of building, shipping, and running applications. In this post, we’ve learned how to write a Dockerfile as well as build, tag, and publish Docker images. Now it’s time to build on this knowledge and learn how to automate the entire process using continuous integration and delivery.
